3 July 2020

3 July 2020, by Virginie Tournay, CEVIPOF*

The prominence of digital technology and algorithms in collective life is often analyzed from a technological, organizational and economic perspective; but the way in which artificial intelligence is substantively transforming the political world is more rarely discussed. It is nonetheless a fundamental issue. Rather than seeing the globalization of these tools as an expression of neo-capitalist excess, my book L’intelligence artificielle. Les enjeux politiques de l’amélioration des capacités humaines (Ellipses, March 2020) views the phenomenon as a historical transition. That transition, while it marks a radical renewal in ways of understanding collective life, reflects a constant of humanity: the desire to improve human capacities on both an individual and a social level. I present an overview of existing work with the idea of better understanding how our digital civilization is taking shape.

Artificial Intelligence: A Revolution in the Organisation and Perception of our Societies

The digital revolution has accelerated over the past decade, transforming our collective perception of the world around us. That perception now passes through the filter of algorithms that are increasingly numerous and invisible to users of connected objects and services. The revolution goes hand in hand with a new economy marked by the rise of platforms that define its organisational components. This is the case for the internet giants, including the American GAFAM companies (Google, Apple, Facebook, Amazon and Microsoft) that dominate the market by their presence throughout the Internet and Web infrastructure. Platforms such as Uber in transportation, Twitter in social networks and Netflix in audiovisual culture, while newer, are experiencing exponential growth. Their strength lies in relying on “customer experience” to develop increasingly specific individualised services. Other actors, such as the Chinese firms Baidu, Alibaba, Tencent and Xiaomi, have made themselves more and more indispensable. Apart from the developments in computer programming, the digital revolution has mainly been organisational and managerial in nature. It is characterised by a new information economy and a reworking of citizens’ relations to this “digital” Republic. From the opening up of public data to the use of digital tools in sovereign state functions, as well as in defense, legal information and public services, algorithms have impacted the shaping of our shared world and the democratic process itself.

A Working Definition: Algorithms at the Heart of Decision-Making

Defined in 1956 by experts in the field, artificial intelligence began as an academic discipline with the aim of simulating and artificially reconstructing cognitive processes through “thinking machines”. Today, that historical perspective familiar to specialists is linked to a social definition, which makes it a more difficult subject to document in a way that is both precise and comprehensive. Drawing on mathematician Gilles Savard’s definition, artificial intelligence involves everything that pertains to algorithmics; in other words, it refers to series of instructions applied to huge volumes of data. These are the software and technical systems that provide additional features for phones, computers, robots, etc. This process may lead to ascribing to machines cognitive abilities such as machine learning, visual recognition or machine translation.

The generic term artificial intelligence refers as much to algorithmic methodologies as to the tools’ areas of application. But, beyond this working definition, the notion of artificial intelligence remains difficult to grasp due to its multiple types of production and uses. From algorithms used to predict epidemics to those in the cultural industry that create recommendations for books (Amazon) or films (Netflix), their production, use and regulation clearly do not mobilise the same actors, shared goals, or even a common technical infrastructure.

In order to grasp the political implications of artificial intelligence, we must examine the various practices impacted by these uses and contextualise them in their relationship to time and space, while incorporating the materiality and impact of these “high-performance” decision-making support systems. Using algorithms to identify the criminogenic areas detected by the police, or to quantify the likely evolution of a complex pathology, raises issues of responsibility. Does responsibility lie with the machine, or should it be assumed by the doctor? Will these new ways of monitoring offenses be favored at the expense of more traditional methods? It is also important to distinguish the political effects that have resulted from the new “algorithmic” information economy from concerns related to the diversification of human-machine interfaces. The transformations underway on these two levels are very different in nature. In the first case, artificial intelligence is a confirmed trend that is destined to last. The availability and accessibility of data (as provided on the website data.gouv.fr, the French public data platform, in accordance with the 2016 loi sur la République numérique, or Digital Republic Act), its processing by different operators, as well as real-time monitoring of activities with ever increasing precision, have fostered political rationales whose full consequences are still to be gauged.

DNA © Elias Sch. Pixabay

In the second case, even though medical human-machine interfaces have been the source of significant advances (renal dialysis, defibrillators, diagnostic assistance, follow-up of chronic pathologies such as diabetes, neurostimulation, etc.), the relationship between humans and machines is more an issue of media sensationalism around “enhanced humans” or transhumanism.

The Relevance of Artificial Intelligence and its Political Implications

While the history of artificial intelligence is inseparable from major advances in computer science in recent decades, its development is no longer limited to the discipline’s current level of knowledge. Artificial intelligence has become a social matter because its applications now affect all citizens. It is also connected to the history of information and its major disruptions.

Shenzhen, April 2018: facial recognition technology at street level, used to identify jaywalkers and automatically issue them fines by text message. © StreetVJ, Shutterstock

The acceleration of information transmission through the Internet and the transformation of citizens – who, online, have become producers, informants and receivers of media narratives – testifies to the importance of sorting and recognition algorithms in the structuring of digital crowds and the recomposition of the public sphere. As a potential instrument of social control, algorithms contribute to the structuring of forms of public opinion and the means of measuring it. Is politics dissolving in algorithmic expertise?

Artificial intelligence is a political matter because these developments challenge the organisation of society (polity), its political communication (politics) and public policies. Numerous reports from public institutions have been published in the past three years. For the most part, they strive to characterise general trends in the development of these technologies, distinguishing them from those that are pure speculation. The right tone is hard to find because collective representations of artificial intelligence are dual. On the one hand, they subvert the Promethean mindset by pitting man against machines, evoking abuses, dangers, or tsunamis; but, on the other hand, they are characterised by great promise and social expectations in health, education and energy. Understanding the implications of digital technology requires contextualising the quest for human improvement in a long-term historical perspective.

For a Collective Awareness of Algorithms in Everyday Life

Our daily activities are increasingly “mediated” by algorithms, whether we’re using a search engine to find some information, communicating on social networks or buying tickets for a performance online. Paradoxically, this massive appropriation of digital technology elicits very little democratic mobilisation or opposition. This “passivity” can be explained both by the fact that the general effects of digital technology are often not noticeable on a collective level and by the fact that the news media themselves are dependent on algorithmic regulations.

Announcement about the StopCovid application on the website of the French Ministry of the Economy, June 2, 2020

The government’s use of algorithms occasionally sparks a debate, as with the digital application Parcoursup, developed to pre-register candidates for admission to higher education, or the StopCovid mobile application, designed to warn of possible contact with an infected person. However, there have been no social protests, nor any partisan divides, around the widespread use of algorithms in society. This contrasts sharply with the media outpouring around promises related to artificial intelligence technologies.

Popularised under the “digital divide” banner, the incomplete democratisation of digital access and use has been highlighted in public and community rhetoric. Although a national strategy for an inclusive digital society was initiated in France as early as 2013, citizens’ access to connectivity and their use of digital resources are far from complete. In the context of massive and rapid dematerialisation of public services, more than one in three French people in 2017 faced obstacles in the use of digital technology and were unable to carry out administrative procedures online. This divide proved particularly problematic during the lockdown, especially on an educational level.

Artificial Intelligence Practices: A Social Upheaval?

To provide some answers to this question, I started from an anthropological study of technical objects.

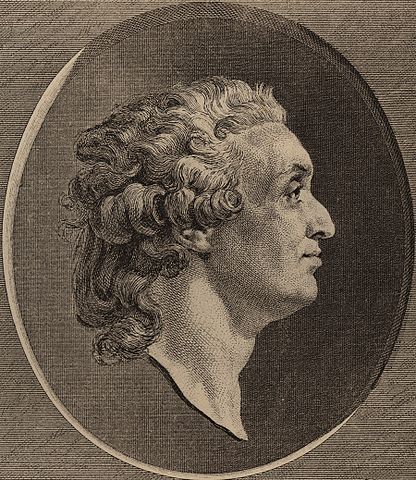

Marquis de Condorcet (1743-1794), French philosopher, mathematician, and political scientist, 1789. © Public Domain

The improvement of both individual and social human capacities is not a recent issue; indeed, it has been at the heart of man’s relationship to technology since the 18th century. Its social function is part of the particular ideal of the Enlightenment, notably “the indefinite perfectibility of the human mind” as expressed by Condorcet in his Sketch for a Historical Picture of the Progress of the Human Mind. Fundamental to his work, this notion of “perfectibility” lays out a threefold objective: the destruction of inequality among nations, the progress of equality within one people, and finally, the real perfecting of man. These three dimensions are implicit in the social expectations linked to the development of artificial intelligence. They reflect the founding myth of progress in modern societies, whose collective reactivation both frightens and fascinates. The social construct conveyed by artificial intelligence goes far beyond what is traditionally found in disruptive innovations (genetic engineering, nuclear technology, space exploration). Defining the political implications of artificial intelligence therefore involves examining the weight of founding myths and assessing the true political scope of this technological sphere by distinguishing what constitutes real progress from sensationalist declarations. Taking a long-term historical view, we can contrast true societal upheavals with what amount to extensions or repetitions of older controversies, such as those pertaining to the protection of privacy with video surveillance, the use of personal data by insurance companies or the regulation of “fake news”.

Integrating Artificial Intelligence Into Society

Developing a critical culture of algorithms is a social necessity, to quote Dominique Cardon. It must occur on several levels. The first is to emphasise that the development of such a culture is free neither from societal norms nor from geopolitical relations of power, which are also cultural. This means that individual citizens must use their capacity for discernment and critical thinking in their everyday relations with the world. The second level involves giving a more balanced view of the predictive capabilities of algorithms by highlighting limitations that are both empirical (quality and completeness of databanks) and epistemological (an algorithm’s decision-making path cannot always be traced). Finally, if we address the regulation of artificial intelligence on a political level (algorithmic production, intervention and regulation), we still need to determine how to divide responsibilities between what involves parliamentary policy (debates to be presented before national representatives) and what pertains to regulatory policy (sustained by state technostructures).

Only under such conditions can a working humanism be established around digital issues.

Translated by William Snow

Virginie Tournay is a CNRS research director at the Sciences Po Reference Center for Political Science (CEVIPOF). A biologist by training, she is also a sociologist, creating a partnership that is increasingly necessary between the so-called exact sciences and the social sciences. She is a member of the scientific council of OPECST (Parliamentary Office for Science and Technology Options Assessment). Armed with this knowledge, her research focuses on life science policies (regulating the biological body, medical and agricultural biotechnology, biodiversity) and the relationships between scientific expertise and public decision-making.